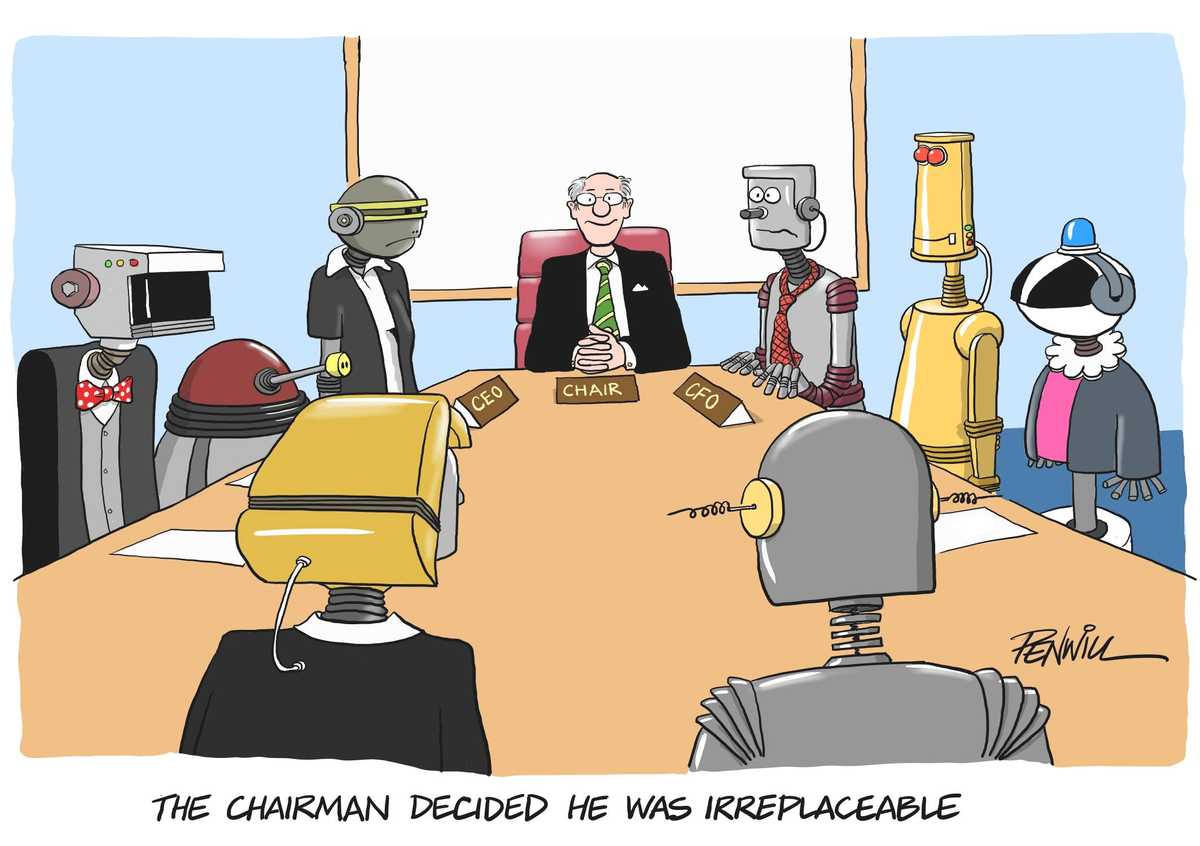

NED Versus Machine

01 Jun

OK, so the NED role being taken over by a successor of the robotic kind may well be a generation away. Or maybe not? Assessing data, analysing trends and asking logical questions are already largely within a machine's capabilities. Machines can even give advice, which is, after all, one of the main things boards do. Once the lack of emotional capacity is solved, who knows? It would certainly make succession planning easier!

The age of Artificial Intelligence is already here, and boards need to start thinking through how they are going to deal with it. AI is not just another product or service development: it will fundamentally change how organisations operate and interact with customers and suppliers, and change the nature of their relationships with stakeholders such as employees, regulators and investors. The timing and impact may be uncertain, but the changes will be so far-reaching and fundamental that boards need to start considering what their worlds, internal and external, might look like in the not-too-distant future. Below we outline a few things for boards to start thinking about, based on the conversations at a recent seminar we organised with an author and businessman who is an AI expert.

Good practices to consider…

As a first step, create the space and time to think through what the impact might be on all aspects of the organisation. AI is no longer a futuristic dream – it’s rapidly becoming part of how you run your business and it has many applications. How could AI impact the way you operate? What are the implications for the products and services you offer? How will you interact with your customers, market to them, and predict their behaviour? What are your competitors doing and what are the new risks?

Things to avoid…

Delaying the thinking until you become a laggard. Things are moving fast. The lack of emotional intelligence, the inability to learn from single instances and the absence of common sense are well-recognised limitations, but once these start to be resolved, the game changes massively. A tentative first step at next year's strategy away day is unlikely to be enough: it probably won't move you far down the road, and waiting months for another attempt would be the wrong response.

Good practices to consider…

Challenge management on how AI is used to engage with customers. Can bots effectively answer customer queries at any time of day? Is enough being done to target different market segments? Can stock and resources be managed more efficiently by predicting customer behaviour?

Things to avoid…

Not recognising the opportunity. Using AI effectively can be about thinking outside the box – something NEDs might be better positioned to do than management who are focussed on running the business day to day. NEDs might also be able to bring cross-industry experience that can be applied in a different way in your sector.

Good practices to consider…

Be comfortable with not getting a definitive answer. There are few limits on where AI might have an impact, whether it's reducing your organisation's energy consumption, resolving insurance claims or simply answering the phone more intelligently and less annoyingly than your competitors. No one is entirely sure how far and how fast the applications will emerge, but what is certain is that it will mean stepping outside current norms and ways of thinking and doing business.

Things to avoid…

Just saying “we’ll wait and see once we know more”. Of course, there are a lot of unknowns. And some – possibly many – of the scenarios you consider will turn out to be wide of the mark. But some won’t. And just going through the process will give a much better understanding of what AI is all about.

Good practices to consider…

Bring in the people who really understand the possible opportunities and consequences and who will challenge your thinking. Recognise that most of you are unlikely to be experts, and that boards really need to hear from someone who is up to date. That doesn't just mean listening to the in-house view. Valuable though that may be, this game is all about thinking in new ways and learning from what experts outside the organisation are achieving.

Things to avoid…

Adopting the position of "yes, we had AI on the board agenda a few meetings ago". This is complicated stuff, and it's not enough to "ask sensible questions" from first principles. That way you run the risk of missing the opportunities, or of not noticing where you're falling behind. Boards need serious, in-depth briefings, and experts working alongside management so that the external view can be applied to the real-life implications for your organisation. And a one-off, one-hour session isn't going to cut it: more regular briefings are likely to be needed to keep you up to speed.

Good practices to consider…

Focus on the implications for your staff. It may be about hiring AI specialists (who are increasingly expensive), but don’t forget to make sure your own workforce are being upskilled.

Things to avoid…

Failing to integrate AI with your human staff! There's little point making a big cost-saving or profit-boosting investment if no one can get the best out of it. And don't forget the cultural angle. People are likely to resist change, and there needs to be a strategy for overcoming that resistance.

Good practices to consider…

Work out how, and in which areas, the Board will need to provide challenge and oversight. The consequences, risks and opportunities are wide-ranging, and boards will need a coherent view (an "oversight map", perhaps) of what they'll need to understand and discuss with management.

Things to avoid…

Hoping there's a fellow tech-savvy NED you can rely on. To do so is to misunderstand AI in the first place. Yes, the data and intelligence behind AI are, of course, based on new technologies. But it's the impact on people, products and the organisation that a board needs to be on top of, and that makes it a whole-board responsibility. This isn't something that can be delegated. The solution may instead be for individual NEDs to take ownership of, and specialise in, different aspects of the AI strategy and risk management.

Good practices to consider…

Make it a regular board agenda item, possibly for every meeting. A board needs to know that management are staying on top of developments. It might make sense to split the Board's review into themes within the overall AI framework: one meeting might focus on customers and competitors, another on products and services, and another on internal processes and management.

Things to avoid…

Relying on covering AI through a once- or twice-yearly agenda item, where all the different elements are lumped together under a single "AI" heading. It isn't that simple.

Good practices to consider…

Work out which board committee is going to take responsibility for more in-depth review. If it’s decided there’s no natural home among the committees and it needs to be a strategic item covered by the full Board, the time will have to be created in the board agenda to make sure it gets the thorough discussion it merits.

Things to avoid…

Lumping it in with cyber and giving it to the Audit (Risk) Committee. This is about strategy, not just data, systems and financial management and reporting. It will cross all committees. If a more detailed review is needed than is possible at a board meeting, is now the time to get ahead of the game and set up an AI Committee? We haven’t seen one yet but perhaps its time has come.

Good practices to consider…

Understand who is taking responsibility across the executive team – and how the ExCo is taking collective responsibility. There may be one person in the lead (and that should probably be the CEO), but all executives must be considering the opportunities and impact in their areas.

Things to avoid…

Leaving it to the CIO to bring AI to the Board. Yes, they might be the right person to explain the technology environment and how it all works technically. But they are unlikely to be best placed to discuss the implications across the organisation and its products, markets and people.

Good practices to consider…

Assess progress, agility and mindset. The biggest challenge may turn out to be not technological but getting the organisation to change quickly enough. But such intangible angles are difficult to assess and measure: how will the Board get an objective, reliable view?

Things to avoid…

Assuming that the organisation can change by itself, or thinking that just because there's an "AI initiative" underway, that's going to be enough. Boards should already be hearing from CEOs about how they are going to manage the impact of AI, and also how they're making sure the organisation is nimble enough. Boards should be working out a way of assessing progress rather than just relying on assurances from the CEO or CIO.

Good practices to consider…

Start thinking through the risks and flipsides. Use of AI and big data brings with it complex ethical and legal questions. For example, if decisions are based on data, is the decision-making sound without emotional intervention? What about those instances when policy needs to be overridden by common sense? What if Mr Robot gets it wrong and there are consequences for customers or employees? What’s the liability and the reputation risk? Who judges the reliability? And how is that different from human decision-making?

Things to avoid…

Leaving the business to start exploiting AI as it sees fit. There are potentially huge implications for the organisation's risks and risk management. Business leaders, including the Board, need to understand and have confidence in how AI makes decisions. Go too slowly and you risk being left behind; rush in too fast and without controls, and the Board may be facing a major reputational scandal or legal challenge.